Why technologists need to find their Oppenheimer

This week, tech leaders (and not a few chancers) roll into town for London Tech Week. Across discussions about artificial intelligence and the fourth Industrial Revolution, you will hear talk of technology as brilliant, enabling and representing progress. You may hear mention that it is disruptive and how it supports frictionless interactions with customers.

That word is about the only indication you are likely to find that technology has social and economic consequences. From Silicon Valley eastwards, frictionless actually means in the absence of humans. Which really means replacing physical employees with robotic processes, automation or AI.

What is remarkable whenever tech people gather or launch their latest innovations is how they appear to operate in isolation from the real world. Smart technology may connect to the internet, but its creators seem disconnected from humanity. How else to explain decisions that have potentially serious implications, yet could easily be avoided by thinking from the perspective of an ordinary person?

The recent Which? examination of connected devices revealed a prime example of this - the Philips Bluetooth toothbrush, which asked for access to the microphone on users’ smartphones. The device links to an app which monitors brushing habits and frequency, no doubt offering to compare them with how a community of other users get on, as is now common with such tools. Just as common is for app developers to hook into as many data collection tools as they can, including location via GPS, cameras and, as in this case, microphones.

According to the manufacturer, this facility is not used. Which raises the question, why enable it? Not only does it run directly counter to GDPR requirements to limit purpose and minimise data, it seems profoundly intrusive.

When challenged about this type of activity, the standard response of the tech world is to claim that consumers do not care - they are buying connected devices and making use of them, so they must be happy with the experience. On the back of this assumption, a wave of overt surveillance devices - from voice-activated assistants to smart home security - is being driven into the home.

But history is littered with technologies that had negative consequences which either had not been foreseen or could have been avoided simply by thinking like a human being. One explanation for this blindness to the impact when a product lands is the way technologists are taught. Ethical modules are entirely absent from academic curricula and philosophical discussion amounts to a few slogans on a T-shirt.

To pick on one example currently seeking to raise funds via Kickstarter, FOCI is a wearable based on decades of neuro-physiological research. That research has found a correlation between breathing patterns and six states of mind. Prime among those is flow, the point at which we are focused and able to work at our optimum output. As the pitch points out, we generally get distracted every three minutes. It also calculates this as 31 days of lost productivity per year.

The device works by monitoring breathing rate and then vibrating to nudge the user back into flow. While its creators have a starting point of helping their fellow students to work better, it is also easy to see commercial applications. Airline pilots need to be in a state of flow during the most critical stages of a journey, such as take-off and landing. From there, it is easy to see how military applications could be developed.

But likely big investors would be logistics companies like Amazon, which are already monitoring their workers’ every movement as part of their journey towards having no human employees at all. This new device opens up a previously inaccessible realm to employers - the human mind. Yet as anybody who regularly needs to do extended periods of concentrated work knows, from coding and maths to composing or acting, flow is not something you can sustain for eight hours a day, five days a week. The output which flow delivers is also rooted in those moments of distraction, including being either stressed or calm, when the brain does much of its work subconsciously before it becomes accessible in a conscious state.

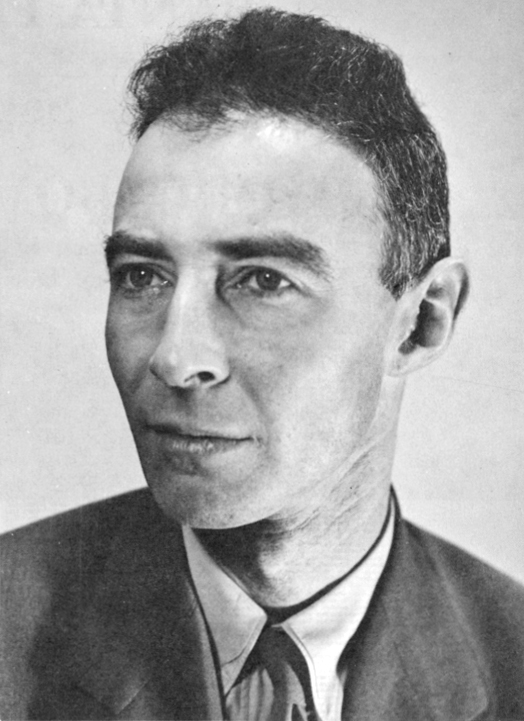

At the extreme end of the scale of this invasion of the human realm, we know only too well what happens when you develop a technology with no thought for its consequences. J. Robert Oppenheimer moved from theoretical physics to its practical application by way of the atomic bomb, only to regret the result and become a campaigner for nuclear weapons control, famously saying of his work, “I am become Death, the destroyer of worlds”.

It is to be hoped that today’s AI-enabled, data-harvesting technologies are not capable of the same level of impact. Nevertheless, they will have some negative consequences, ranging from the loss of jobs to the potential for unlimited surveillance of citizens, which need to be considered far earlier in the development and distribution of these tools, rather than waiting to see what happens post-adoption. You don’t have to be an ardent privacy campaigner or an off-grid marginal to believe that technology’s risks should be looked at with as much care as its user interfaces get.

DataIQ is a trading name of IQ Data Group Limited

Copyright © IQ Data Group Limited 2024

David Reed
