Four principles to balance personalisation and data protection

Using data to personalise experiences without overstepping privacy boundaries is a moving target for organisations today. Customers are still forming their opinions, and businesses are still working out where the lines fall.

I love a product or service that gives me what I want, rather than an offering that feels watered-down and generic. Let’s face it: we have become accustomed to this and now simply expect it!

I want my music or movie streaming service to show me content I want to listen to or watch at the right time of day (after the kids are asleep) and in the right location (not when I am sat in a meeting). I expect my favourite clothing app or takeaway delivery platform to send me push notifications about sneakers I love and new Korean BBQ restaurants that have opened in my neighbourhood.

But I also don’t like the thought of big business poring over my data and serving me content unexpectedly or in the wrong context. I feel violated when a social media platform serves me an advert based on a random Google search I made earlier in the day, and I feel weirded out when websites show me content based on some hazy inference they have drawn by matching my wife’s profile with mine.

Regulation has also created resilience

With Coronavirus having transformed our world overnight, the ground is shifting on privacy once again, and the balance between personal data privacy and public safety is being tested. Before the crisis, our society was on a trajectory of increasingly tight restrictions on how companies used customer data. Big tech had taken several stances against exploiting customer privacy, for example by locking down third-party cookies. Regulation was also spreading, with California following Europe’s lead on data privacy legislation.

This was largely, if not exclusively, driven by a public awakening to the rights we hold over our own data and how it is used. Coronavirus has been a massive catalyst in further educating society about the value of our data. The progress in protection and regulation over the last few years has created resilience against the exploitation of data as we build solutions that use personal data to help us through this pandemic, such as contact tracing and immunity passports.

So how do customer-facing businesses adapt to the paradox of delivering both privacy and personalisation? I don’t think we can answer this completely given the current pace of change: as the situation evolves, so will the solutions we need to develop. For now, four principles offer a good place to start in building trust with our customers and maintaining secure, healthy relationships for the foreseeable future.

1. Put the customer in the driving seat

If we are building data-centric experiences, the customer needs more than just an auto-ticked check box to opt in to the service. They need transparency on the categories of data we hold and what each is used for, plus the flexibility to switch off the parts they are not comfortable sharing.

This needs to be clearly signposted and easy for customers to view (not hidden in the darkest corners of our privacy pages amongst legalese). Building this kind of functionality goes a long way to reassuring customers that we are open for honest and transparent business, and that we are not trying to hide something or mislead them into a choice they would not naturally have made.

2. Clearly articulate the value exchange

Our customers need to understand clearly the value they will receive in return for their data. If a customer chooses to opt in to location tracking or marketing cookies, they need to be able to see which valuable personalised services this might unlock for them, or what contribution their data makes to society at large.

The current pandemic is testing this value exchange principle greatly, as whole nations begin to find themselves with no option but to give up their mobile location data if they are to enjoy freedom of movement again any time soon. This is more worrying in Asia than in Europe and North America, where anonymised approaches are being taken up.

There is great promise in the forthcoming APIs from Google and Apple, which protect personal data while allowing contact tracing apps to be developed. Nonetheless, amid all this innovation, I feel that Benjamin Franklin’s famous words from the 1700s are as relevant now as they were then and worth keeping in mind: “Those who would give up essential liberty to purchase a little temporary safety deserve neither liberty nor safety.”

3. Combine explainability with brand trust

Delivering rich and contextual personalisation can be truly compelling if done correctly. Key to this, though, is explaining why we think the content we have chosen to serve up is appropriate and what led our algorithm to arrive at this decision.

Your current context, the events that led you to where you are and how others like you have reacted are all valuable signals for enriching personalisation with explainability. Customers want and expect a personalised experience, but they will not hand over their data to brands they don’t trust.

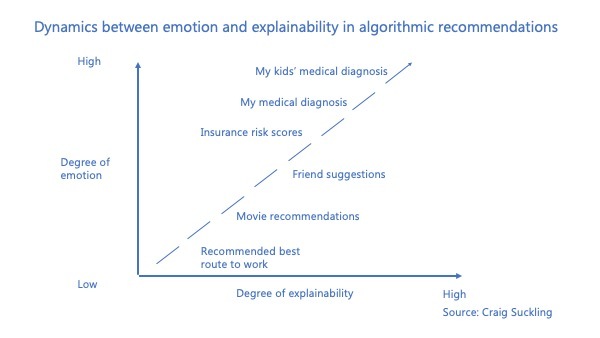

Solving for this requires data-powered digital features that come together to form a trust equation. This is a balance between AI explainability and consumer confidence, in which customers are given the appropriate level of transparency into personalised actions alongside strong brand trust. The more emotionally charged a recommendation is, the more explainability it requires.

4. Don’t yield control to AI yet

Until we get to a place where AI has true emotional intelligence, we will always run the risk of an algorithm serving up strange, or downright wrong, suggestions. Our AI algorithms are simply not advanced enough yet to account for contextual nuance, tone, inference, style and, often, plain common sense.

To catch this, we need to build the right insight capabilities around our models so that we understand how they are working and can tweak and teach them with feedback. For now, frameworks for human oversight are a necessary safeguard on how AI determines the right messaging for our customers. We may always need a balance between human and AI judgement in deciding how we engage with our customers.

As we emerge from the current crisis into a remarkably different new normal, no-one knows how customer sentiment will have changed and how trust will have been impacted. More than ever, it will be critical for brands to build transparency and virtue into the experiences, products and services they create.

We now have a renewed appreciation for the fragility of life and for doing the right thing, no matter the cost. Our customers will expect the same standard in how we treat their data and how we use it to create the tailored experiences we all love.

Happy trust building!

Craig Suckling, head of data and analytics group, IAG Loyalty

DataIQ is a trading name of IQ Data Group Limited