Removing semantic bias to make AI fairer

Dr James Zou, an assistant professor of biomedical data science at Stanford University, has been researching gender and ethnic stereotypes found in word embeddings, a framework used in many AI tasks. However, these embeddings can also capture human biases, with potentially harmful consequences. At a presentation at Imperial College London, Zou discussed stereotypes in word embeddings and a way to enable algorithms to identify their own biases.

What is a word embedding?

Word embedding is a framework in which English words are represented as vectors. Real embeddings typically use hundreds of dimensions, although simple illustrations, like the one below, often use just two. Word embeddings are used in many AI tasks because, according to Zou, “it is much easier for the algorithm to understand the vectors than to understand the English word.” He went on to say that a useful way to think of word embeddings is as a dictionary that translates English words into vectors. What makes the embeddings useful is how the geometry of the vectors captures the semantic relations between the corresponding words.

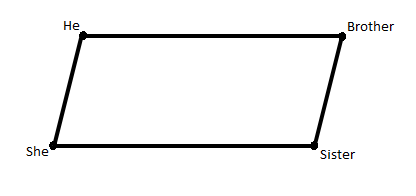

Though a simple representation, this is a word embedding.
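To make the dictionary analogy concrete, here is a minimal Python sketch. The words, the three labelled axes (royalty, gender, food) and every number are made up for illustration. Real embeddings learn hundreds of unlabelled dimensions from large text corpora. Cosine similarity is used here as one common way to compare two vectors.

```python
import math

# Hypothetical toy "dictionary" translating words into vectors.
# Axes are hand-picked for illustration: [royalty, gender (+male), food].
EMBEDDING: dict[str, list[float]] = {
    "king":  [0.9, 0.7, 0.0],
    "queen": [0.9, -0.7, 0.0],
    "man":   [0.1, 0.7, 0.0],
    "woman": [0.1, -0.7, 0.0],
    "apple": [0.0, 0.0, 0.9],
}


def cosine(u: list[float], v: list[float]) -> float:
    """Cosine similarity: 1.0 for the same direction, 0.0 for orthogonal."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def similarity(word_a: str, word_b: str) -> float:
    """Look both words up in the dictionary and compare their vectors."""
    return cosine(EMBEDDING[word_a], EMBEDDING[word_b])


for other in ("queen", "man", "apple"):
    print(f"king ~ {other}: {similarity('king', other):.2f}")
```

With these toy numbers, "king" is more similar to both "queen" and "man" than to "apple". This is the sense in which the geometry of the vectors encodes meaning.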

Zou said that word embeddings are often used to solve analogies. An AI system with a word embedding would be able to complete the following: "Man" is to "king" as "woman" is to _________.

“We find that ‘queen’ among all the English words is the closest one and that’s what the embedding would use to complete this analogy,” said Zou. He explained that over the last four years the machine learning community has become very excited about embeddings. They are now used by large companies such as Google and Twitter, as well as by many academic groups.
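Analogies like this are usually solved with vector arithmetic. Take the vector for "king", subtract "man", add "woman", and look for the nearest remaining word. The sketch below reuses hypothetical hand-made toy vectors. Excluding the three query words from the answer is a common convention; it is assumed here, not taken from Zou's talk.

```python
import math

# Hypothetical toy vectors; axes: [royalty, gender (+male), food].
EMBEDDING = {
    "king":  [0.9, 0.7, 0.0],
    "queen": [0.9, -0.7, 0.0],
    "man":   [0.1, 0.7, 0.0],
    "woman": [0.1, -0.7, 0.0],
    "apple": [0.0, 0.0, 0.9],
}


def cosine(u, v):
    """Cosine similarity between two vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def solve_analogy(a: str, b: str, c: str) -> str:
    """Complete "a is to b as c is to ?" with the word nearest to b - a + c."""
    target = [vb - va + vc
              for va, vb, vc in zip(EMBEDDING[a], EMBEDDING[b], EMBEDDING[c])]
    # Assumed convention: the query words themselves are not valid answers.
    candidates = [w for w in EMBEDDING if w not in {a, b, c}]
    return max(candidates, key=lambda w: cosine(target, EMBEDDING[w]))


print(solve_analogy("man", "king", "woman"))
```

The same function works in reverse. For example, `solve_analogy("woman", "queen", "man")` recovers "king" from these toy vectors.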

How can biases and stereotypes be captured in word embedding?

Two years ago, Zou and his team began looking closely at embeddings to see what biases or stereotypes might be present in them. They gave some analogies to popular embeddings to solve. Below are three of the analogies:

- "He" is to "doctor" as "she" is to __________.

- "He" is to "realist" as "she" is to ___________.

- "He" is to "computer programmer" as "she" is to _________.

The embeddings solved these analogies in a somewhat problematic way, with the words "nurse", "feminist" and "homemaker" respectively. From this, someone with no experience of our society might infer that women are not doctors or computer programmers and that feminists are the antithesis of realists.
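One simple way to see such a stereotype in the geometry is to measure how far each occupation word leans along a gender direction. This sketch approximates that direction with the single difference "he" minus "she". That is a simplification: a sturdier estimate would combine several gendered word pairs. The vectors are hypothetical values chosen to mimic the kind of skew described above. They are not data from any real embedding.

```python
import math

# Hypothetical toy vectors; axes: [gender (+he), medicine, computing].
EMBEDDING = {
    "he":         [1.0, 0.0, 0.0],
    "she":        [-1.0, 0.0, 0.0],
    "doctor":     [0.3, 0.9, 0.1],
    "nurse":      [-0.4, 0.9, 0.0],
    "programmer": [0.3, 0.1, 0.9],
    "homemaker":  [-0.5, 0.1, 0.1],
}


def unit(v):
    """Scale a vector to length 1."""
    n = math.sqrt(sum(x * x for x in v))
    return [x / n for x in v]


def gender_direction():
    """Approximate the gender direction with the single difference he - she."""
    return unit([h - s for h, s in zip(EMBEDDING["he"], EMBEDDING["she"])])


def gender_lean(word):
    """Cosine with the he - she direction: > 0 leans 'he', < 0 leans 'she'."""
    g = gender_direction()
    w = unit(EMBEDDING[word])
    return sum(a * b for a, b in zip(w, g))


for word in ("doctor", "nurse", "programmer", "homemaker"):
    print(f"{word:>10}: {gender_lean(word):+.2f}")
```

In a well-behaved embedding, words such as "doctor" or "nurse" would score close to zero. A consistent sign pattern across occupations is the kind of signal that points to a built-in stereotype.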

“The gender stereotypes are so intrinsically built into the embeddings, into the geometry that is effectively building its own dictionary. Definitions can have a lot of ramifications downstream for AI tasks such as translation and the targeting of adverts," said Zou.

In a November 2017 Facebook post, Emre Şarbak illustrated the bias of Google Translate by using the tool to translate non-gendered Turkish sentences into English. He wrote: “Google Translate is basically acting like all engineers, doctors, single people, hardworking people, that are mentioned in Turkish texts, refer to men, and nurses, teachers, married people, unhappy people, lazy people refer to women.”

Researchers from Carnegie Mellon University conducted a study in 2015 which found that female job seekers were less likely than their male counterparts to be shown adverts for highly paid positions. Zou also conducted an experiment on this issue, which found that gender affects how highly the websites of computer science graduate students are ranked.

As Zou said: “Because the embeddings are used in all these applications, from rankings to recommendation systems to natural language processing, these subtle biases could have potentially harmful effects.”

How can biases and stereotypes in embeddings be reduced?

Zou and his team have come up with a way to reduce the stereotypes in the embeddings. They first had to classify each word as either gender neutral (such as "doctor") or gender definitional (such as "she"). For the gender-neutral words, Zou transforms the vectors so that their component in the gender subspace is minimised.

He said: “We can solve efficiently to find this optimal transformation. The debiasing operation is able to preserve the appropriate structures in embeddings and it is able to effectively reduce the stereotype.” He explained that after the debiasing process, when asked to solve "he" is to "doctor" as "she" is to _______, the missing word will be "doctor".
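Here is a minimal sketch of the neutralising idea. It assumes a one-dimensional gender subspace given by "he" minus "she", plus hypothetical toy vectors. Gender-definitional words are left alone. Each gender-neutral word has its projection onto the gender direction subtracted and is then rescaled to unit length. Zou describes solving for an optimal transformation. This plain projection is a simpler stand-in, not his team's exact method.

```python
import math

# Hypothetical toy vectors; axes: [gender (+he), medicine, computing].
EMBEDDING = {
    "he":     [1.0, 0.0, 0.0],
    "she":    [-1.0, 0.0, 0.0],
    "doctor": [0.3, 0.9, 0.1],
    "nurse":  [-0.4, 0.9, 0.0],
}

# Words labelled gender definitional keep their gender component;
# every other word is treated as gender neutral.
GENDER_DEFINITIONAL = {"he", "she"}


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def unit(v):
    """Scale to length 1 (a zero vector is returned unchanged)."""
    n = math.sqrt(dot(v, v))
    return [x / n for x in v] if n > 0 else list(v)


def neutralize(embedding, definitional):
    """Remove the he - she component from every gender-neutral word."""
    g = unit([h - s for h, s in zip(embedding["he"], embedding["she"])])
    debiased = {}
    for word, vec in embedding.items():
        if word in definitional:
            debiased[word] = list(vec)  # e.g. "he"/"she" stay as they are
        else:
            proj = dot(vec, g)  # length of the component along g
            debiased[word] = unit([x - proj * gi for x, gi in zip(vec, g)])
    return debiased


debiased = neutralize(EMBEDDING, GENDER_DEFINITIONAL)
print(debiased["doctor"], debiased["nurse"])
```

After this step, "doctor" and "nurse" no longer differ along the gender axis, while the medical dimension that relates them is preserved.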

Zou said that debiased embeddings are now used by many large tech companies, such as Google, Twitter, Microsoft and Facebook, as part of their machine learning, and by their researchers and engineers in diagnostic pipelines. However, new machine learning algorithms are coming out by the hour, and checking each one manually would take so much time and effort that it would not scale.

How can an AI audit identify biases in embeddings?

However, Zou said that an AI audit system that uses machine learning to identify and remove biases would be scalable. This AI audit system would look at some of the internal representations of the original algorithms. Using the example of machine learning in digital medicine, he explained: “There’s the original classifier which could be a neural network or a random forest or a decision tree and that will look at, based on internal representation, whether there are specific sub-clusters of individuals or sub-populations that the original classifier is systematically making mistakes with.”
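The auditing idea can be sketched as follows, under clearly stated assumptions. The "internal representations" are hypothetical 2-d points. The auditor groups them with plain k-means, which is one possible choice of clustering and not necessarily Zou's. A cluster is flagged when its error rate exceeds the classifier's overall error rate. Note that the auditor never sees race, gender or any other protected attribute.

```python
def dist2(p, q):
    """Squared Euclidean distance between two points."""
    return sum((a - b) ** 2 for a, b in zip(p, q))


def assign(points, centroids):
    """Index of the nearest centroid for every point."""
    return [min(range(len(centroids)), key=lambda j: dist2(p, centroids[j]))
            for p in points]


def kmeans(points, k, iters=20):
    """Plain k-means with a deterministic farthest-point initialisation."""
    centroids = [points[0]]
    while len(centroids) < k:
        # Next centroid: the point farthest from all existing centroids.
        centroids.append(max(points, key=lambda p: min(dist2(p, c) for c in centroids)))
    for _ in range(iters):
        labels = assign(points, centroids)
        for j in range(k):
            members = [p for p, lab in zip(points, labels) if lab == j]
            if members:
                centroids[j] = tuple(sum(xs) / len(members) for xs in zip(*members))
    return assign(points, centroids)


def audit(representations, labels, predictions, k=2):
    """Return the overall error rate and clusters that do worse than it."""
    clusters = kmeans(representations, k)
    overall = sum(y != p for y, p in zip(labels, predictions)) / len(labels)
    flagged = []
    for j in range(k):
        idx = [i for i, c in enumerate(clusters) if c == j]
        if not idx:
            continue
        err = sum(labels[i] != predictions[i] for i in idx) / len(idx)
        if err > overall:
            flagged.append((j, len(idx), err))  # (cluster id, size, error rate)
    return overall, flagged


# Hypothetical data: 20 individuals as two well-separated groups of points.
group_a = [(0.1 * i, 0.1 * (i % 3)) for i in range(10)]
group_b = [(5 + 0.1 * i, 5 + 0.1 * (i % 3)) for i in range(10)]
reps = group_a + group_b
y = [i % 2 for i in range(20)]
# The classifier is right on group A but always predicts 1 on group B.
preds = y[:10] + [1] * 10

overall, flagged = audit(reps, y, preds)
print(overall, flagged)
```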

He said that the goal is for the auditor to learn the sub-clusters of individuals on which the original classifier is biased and to suggest improvements for those clusters. It should do this without necessarily having any explicit specification of the sub-group's characteristics, such as race or gender.
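Suggesting improvements for a flagged cluster can be sketched in the spirit of post-processing methods such as multiaccuracy boosting. The approach nudges the classifier's predicted probabilities inside each cluster until the average residual (label minus prediction) is close to zero. The function name `correct_by_cluster`, the tolerance and the data are all hypothetical. This illustrates the idea and is not Zou's published algorithm or its guarantees.

```python
def correct_by_cluster(probs, labels, clusters, tol=0.05, max_rounds=10):
    """Shift predicted probabilities per cluster until each cluster's mean
    residual (label - probability) is within `tol`.

    Only cluster ids (derived from internal representations) are used,
    never protected attributes such as race or gender.
    """
    probs = list(probs)
    for _ in range(max_rounds):
        changed = False
        for c in set(clusters):
            idx = [i for i, ci in enumerate(clusters) if ci == c]
            residual = sum(labels[i] - probs[i] for i in idx) / len(idx)
            if abs(residual) > tol:
                # Shift the whole cluster, keeping values valid probabilities.
                for i in idx:
                    probs[i] = min(1.0, max(0.0, probs[i] + residual))
                changed = True
        if not changed:
            break
    return probs


def brier(probs, labels):
    """Mean squared error between probabilities and 0/1 labels."""
    return sum((p - y) ** 2 for p, y in zip(probs, labels)) / len(labels)


# Hypothetical example: cluster 0 is well calibrated, cluster 1 is not.
labels = [1, 0, 1, 0, 1, 1, 1, 0]
probs = [0.8, 0.2, 0.7, 0.3, 0.2, 0.3, 0.4, 0.1]
clusters = [0, 0, 0, 0, 1, 1, 1, 1]

new_probs = correct_by_cluster(probs, labels, clusters)
print(brier(probs, labels), brier(new_probs, labels))
```

Each cluster is shifted by its own mean residual. As long as clipping to [0, 1] does not bind, that is the best constant adjustment for the cluster under squared error. The toy error therefore drops, while the already well-calibrated cluster is left untouched.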

“We are able to show various mathematical guarantees that this auditor is very efficient at improving the overall accuracy and also reducing the biases of the different sub-populations simultaneously,” Zou explained. So while the problem of bias in semantic algorithms and its negative consequences are being uncovered, work is under way to make AI fairer and less biased, starting with the removal of biases from word embeddings.

As Zou said: “You would like your dictionary to be a more objective version of the world. It would be problematic if your dictionary says a nurse has to be a woman and a computer programmer has to be a man.”

DataIQ is a trading name of IQ Data Group Limited

10 York Road, London, SE1 7ND

Phone: +44 020 3821 5665

Registered in England: 9900834

Copyright © IQ Data Group Limited 2024