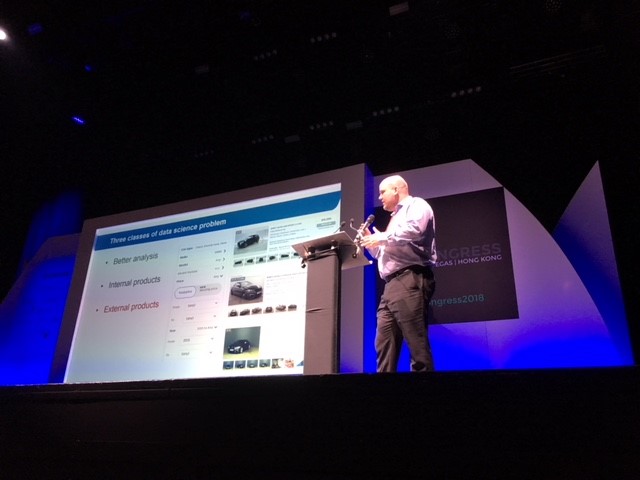

Lessons learnt from AutoTrader algorithms

Dr Peter Appleby is the head of data science at AutoTrader, having worked his way up from data scientist and lead data scientist positions. By going through the process of productionising algorithms, he learnt the important lessons of playing to one’s strengths and having one person take ownership of the entire pipeline. This is how he made those discoveries.

AutoTrader is a mid-size organisation of 800 people, which Appleby described as agile because its product teams each include delivery, tech and product leads.

"If we make changes to the model, we have to do it as soon as possible."

He and his team create models for internal and external audiences. However, those that are public-facing need to be very robust because they generate a large number of queries, ranging from hundreds to millions per day. “If we make changes to the model, either in retraining or interrogating the algorithm, we have to make that change as soon as possible. If it suddenly changes from one day to the next, people mistrust the outputs,” he said.

Appleby gave an example from AutoTrader of an algorithm which adjusted the price valuation of vehicles depending on their specifications and would subsequently award the motor with a ‘good’ or ‘great’ price sticker. “We’re looking at a car and we’re saying ‘OK, even if it is £1,000 more expensive than a similar car, as a package it is actually a better price because of the optional spec items that are on it’,” said Appleby.
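The idea of a spec-adjusted price label can be illustrated with a minimal Python sketch. It is not AutoTrader's algorithm: the spec item names, their pound values, the 5% threshold for a ‘great’ price and the function names are all hypothetical, chosen only to reproduce the kind of comparison Appleby describes.

```python
from typing import Dict, Iterable, Optional

# Hypothetical per-item values in pounds. In practice a trained model
# would estimate these; the figures here are invented for illustration.
SPEC_VALUES: Dict[str, float] = {
    "panoramic_roof": 900.0,
    "leather_seats": 600.0,
    "sat_nav": 300.0,
}


def spec_adjusted_valuation(base_valuation: float, specs: Iterable[str]) -> float:
    """Add the value of each recognised optional spec item to the base valuation."""
    return base_valuation + sum(SPEC_VALUES.get(item, 0.0) for item in specs)


def price_label(asking_price: float, base_valuation: float,
                specs: Iterable[str]) -> Optional[str]:
    """Return 'great', 'good' or None by comparing the asking price
    with the spec-adjusted valuation (thresholds are assumptions)."""
    adjusted = spec_adjusted_valuation(base_valuation, specs)
    ratio = asking_price / adjusted
    if ratio <= 0.95:
        return "great"
    if ratio <= 1.0:
        return "good"
    return None


# Two similar cars with the same base valuation of 10,000 pounds.
plain_car = price_label(10_000, 10_000, [])
# This one costs 1,000 pounds more but carries 1,800 pounds of optional spec.
specced_car = price_label(
    11_000, 10_000, ["panoramic_roof", "leather_seats", "sat_nav"]
)
```

Under these assumed figures the dearer car earns the better label, because its asking price is lower relative to what the whole package is valued at.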

He described the original spec-adjusted valuation engine in the form of a flow chart, with car data going in, being processed into data labelled in terms of spec items and the valuation coming out at the other end. Appleby explained that the data engineers were responsible for extracting data from the car adverts and marking it up with the specifications.

The data scientists were in charge of the training model, which valued the spec items. The data engineers were then involved again, writing the interrogation model that altered the coefficients and was queried by the API. The product people, at the end of the process, were in charge of writing the API and serving that result as a product.

The creation and deployment of this model was not entirely smooth. “There were obvious gaps in responsibility that things could fall into and did. There’s no end-to-end ownership of the whole chain and this led to a number of problems, particularly with changes in the spec extraction model,” said Appleby.

As there was no clear end-to-end ownership, Appleby said, they tended to focus on individual components instead of the whole chain, and changes that were made had downstream consequences that they couldn’t handle. As a result of all of these factors, Appleby said, this was “the most complicated product that AutoTrader has ever produced.”

And so, they came up with some principles to solve those problems. The first was to find someone to take end-to-end ownership of the whole pipeline. “We need to have clear responsibilities of the ownership of the different sections. That can be shared ownership. That’s fine, as long as it’s clear who is responsible for what.”

"We want data scientists to do what they’re good at - discovery, model selection, training.”

Another was to play to their strengths. This meant everyone in the team doing what they are best at. “We don’t want data scientists writing performant and productionised code that’s interrogated by an API. We want them to do what they’re good at, which is the discovery, model selection, and training,” he said. They also decided to scrap the translation layer altogether.

Now there is just one code base and it is looked after by one person. “We are more confident that we are not going to get discrepancies between the training and the interrogation code.” The data engineers are still involved in loading and shifting data into the data lake or data warehouse, while the product team still does the API, but now they are taking shared ownership of the actual model.
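One way to picture why a single code base reduces such discrepancies is the Python sketch below, in which training and interrogation share one feature-encoding function. Everything in it is an assumption made for illustration: the `SpecModel` class, the spec item names, the sample adverts and the crude difference-of-means estimator, which stands in for whatever model AutoTrader actually trains.

```python
from statistics import mean
from typing import Dict, List, Sequence, Tuple

# Hypothetical spec items the model knows about.
SPEC_ITEMS = ("sat_nav", "leather_seats")


def encode(specs: Sequence[str]) -> Tuple[int, ...]:
    """Shared feature encoding: the one definition both paths rely on."""
    present = set(specs)
    return tuple(int(item in present) for item in SPEC_ITEMS)


class SpecModel:
    """Training and interrogation live in one class and one code base."""

    def __init__(self) -> None:
        self.base = 0.0
        self.coefficients: Dict[str, float] = {}

    def train(self, adverts: List[Tuple[Sequence[str], float]]) -> None:
        """Estimate each item's value as the mean price with the item minus
        the mean price without it (a stand-in estimator, not a real model).
        Needs at least one advert in every group, or mean() raises."""
        rows = [(encode(specs), price) for specs, price in adverts]
        self.base = mean(price for features, price in rows if not any(features))
        for i, item in enumerate(SPEC_ITEMS):
            with_item = [price for features, price in rows if features[i]]
            without_item = [price for features, price in rows if not features[i]]
            self.coefficients[item] = mean(with_item) - mean(without_item)

    def value(self, specs: Sequence[str]) -> float:
        """Interrogation path: reuses encode(), so it cannot drift from training."""
        features = encode(specs)
        return self.base + sum(
            self.coefficients[item] * flag
            for item, flag in zip(SPEC_ITEMS, features)
        )


# Invented adverts: (spec items, advertised price in pounds).
ADVERTS = [
    ([], 10_000),
    (["sat_nav"], 10_300),
    (["leather_seats"], 10_600),
    (["sat_nav", "leather_seats"], 10_900),
]

model = SpecModel()
model.train(ADVERTS)
estimate = model.value(["sat_nav", "leather_seats"])
```

Because `value` calls the same `encode` function that `train` used, a change to how spec items are represented reaches both paths at once, rather than having to be translated from one code base into another.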

He said that data science sits in the middle like glue holding things together. “Having done the discovery, they are best placed to evaluate the output of the model on an ongoing basis and make decisions as to whether it’s still doing what we expected it to do.”

According to Appleby, with this simpler model they have a much better approach, as gaps have been eliminated and they have a much more joined-up view of the ecosystem.

Dr Peter Appleby was speaking at AI Congress London.

DataIQ is a trading name of IQ Data Group Limited

Copyright © IQ Data Group Limited 2024

David Reed
