In Siri we trust?

I overheard a curious conversation at the bus stop recently. Two friends were arguing about which route to take from Hammersmith to Hoxton. One seemed to know London quite well, the other not so much. The non-Londoner got her smartphone out, announced the route options and durations according to Google Maps, and added that she had never used a paper Tube map.

When asked why, she responded: “I trust the machine more than I trust my brain.” Having never heard such a statement before, I started questioning myself. How much trust do I put in machines over my own mind? How trustworthy are machines?

I am not very trusting, even of applications that I really enjoy using. I rabbit on to motorists about how great the crowdsourced navigation app Waze is at helping you avoid traffic jams on short journeys. However, during long journeys, I will more often than not pull over and make sure that the app knows exactly where I am trying to go. Being sent east when my final destination is in the north-west just seems counter-intuitive to me, and I don’t trust Waze enough to follow it blindly for more than half an hour.

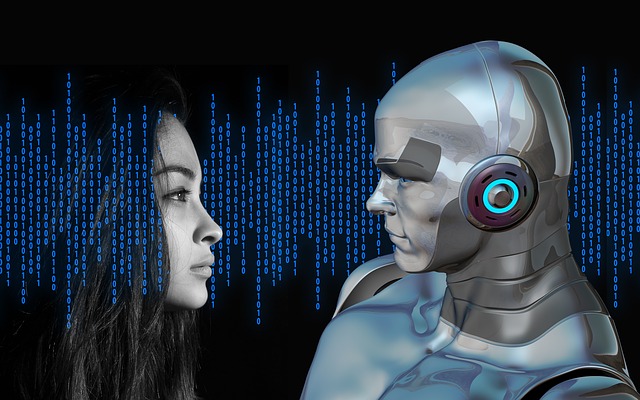

Can AI enable robots to do these things and gain our trust? In a 2016 white paper, IBM addressed this very issue. “Learning to Trust Artificial Intelligence Systems” says that, to reap the societal benefits of AI systems, we will first need to trust them, and that trust is earned through systems behaving as we expect them to.

The paper recommends that every company have in place guidelines that govern the ethical management of its AI operations. These would restrict AI from doing business in ways that would be detrimental to society. It also states that, as trust is built upon accountability, the algorithms that underpin AI systems need to be as transparent as possible.

I would agree with that. If my hotel booking app returns a list of hotels I could stay in for my holiday, I want to know which of those hotels have paid to be bumped up in the rankings. Plus, it would be a good thing if companies that develop AI algorithms were to take something like the Hippocratic Oath for doctors and promise to do no harm.

According to the Harvard Business Review, trust can only be built if systems behave in ways people expect them to. That means firms need to build in trust-building dimensions from the outset of their AI projects if they want them to be accepted by users and customers. Without that, firms will be relying on blind trust and on users’ faith that the machine is smarter than they are. What AI leaders need to be thinking about is this: what will it take to build trust in the higher-powered?

DataIQ is a trading name of IQ Data Group Limited

Copyright © IQ Data Group Limited 2024

David Reed
